Relative Importance of Minification and Compression - 01 April 2012 - javascript

During a recent discussion about the tradeoffs between different representations of our JSON data for an open data project, I thought it important to put this aspect of optimization into context.

Minification is the process by which the text representation of JavaScript (or JSON) is minimized by removing all unnecessary characters from the source, such as whitespace. Sometimes it also involves safely rewriting variable names to shorter ones, and so on.
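For JSON specifically, whitespace removal can be sketched as a parse and re-serialize round trip. This is a minimal illustration, not a full JavaScript minifier: the sample object and the `minifyJson` helper are hypothetical names made up for this example.

```javascript
// Hypothetical sample data; the real markers.json is not reproduced here.
const pretty = JSON.stringify(
  { markers: [{ name: "A", lat: 45.5, lng: -73.6 }] },
  null,
  4 // pretty-print with 4 spaces of indentation
);

// Parsing and re-serializing without an indent argument drops all
// insignificant whitespace while keeping the data itself unchanged.
function minifyJson(text) {
  return JSON.stringify(JSON.parse(text));
}

const minified = minifyJson(pretty);
console.log(pretty.length + " characters -> " + minified.length + " characters");
```

Renaming variables, as JavaScript minifiers do, needs a real parser and is out of scope for this sketch.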

The primary objective of minification is to reduce the time required to transport the javascript, by reducing its size.

But there is another factor which affects the transported script's size, usually to an even greater degree: compression.

When an HTTP response is transported, it may be compressed. This is subject to a negotiation between the browser and the server but, long story short, almost all browsers support compression, and most servers (properly configured) do too. For example, Apache uses mod_deflate.
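The negotiation can be illustrated with a small Node.js sketch: the browser lists the encodings it accepts in the `Accept-Encoding` request header, and the server compresses the body only if `gzip` is among them. The `encodeBody` helper is a hypothetical name, and the header parsing is deliberately simplified (it ignores `q` weights). A real server such as Apache with mod_deflate handles this for you.

```javascript
const zlib = require("zlib");

// Hypothetical helper: pick a response encoding from the request's
// Accept-Encoding header value. Simplified: q-values are ignored.
function encodeBody(body, acceptEncoding) {
  const accepted = (acceptEncoding || "")
    .split(",")
    .map((part) => part.trim().split(";")[0].toLowerCase());

  if (accepted.includes("gzip")) {
    return {
      headers: { "Content-Encoding": "gzip" },
      body: zlib.gzipSync(body),
    };
  }
  // The client did not advertise gzip support: send the body as-is.
  return { headers: {}, body: Buffer.from(body) };
}

const response = encodeBody("var x = 1;\n".repeat(200), "gzip, deflate");
console.log(response.headers, response.body.length + " bytes on the wire");
```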

So while you definitely want to make use of both of these processes, the fact is that compression usually matters more to the final transport size.

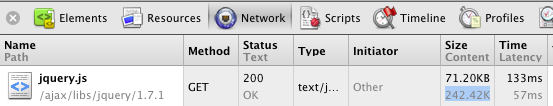

You can see these numbers (Size/Content) for yourself in the Network tab of Firebug or Chrome DevTools.

Here is an example using the jQuery script hosted on the Google CDN:

| | uncompressed | compressed |
|---|---|---|
| jquery-1.7.1.js | 242.42 KB | 71.20 KB |
| jquery-1.7.1.min.js | 91.67 KB | 32.79 KB |
| markers.json | 88.52 KB | 9.64 KB |

Furthermore, these two processes are linked, so a choice that trades away readability, such as using fewer spaces or shorter variable names, may not have the impact you expect. For example, looking at our geo-marker JSON data file, one might say: "Hey, if we used tabs instead of our current spacing, we would save more than 11 KB (86.67 - 75.28 KB) on this file." But once compression is taken into account, we actually save only about 0.1 KB (9.57 - 9.46 KB).

| markers.json | uncompressed | compressed |
|---|---|---|
| as currently checked in | 86.67 KB | 9.57 KB |
| 8 spaces indentation | 86.67 KB | 9.57 KB |
| 4 spaces indentation | 80.16 KB | 9.52 KB |
| 2 spaces indentation | 76.90 KB | 9.49 KB |
| 1 tab indentation | 75.28 KB | 9.46 KB |
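A comparison like this one can be reproduced with a short Node.js script. The sketch below is not the script used for the table above. It generates hypothetical stand-in data, since the real markers.json is not included here, and it uses gzip through Node's built-in `zlib` module, so the absolute numbers will differ. The pattern to look for is the same: large differences in raw size, small differences after compression.

```javascript
const zlib = require("zlib");

// Hypothetical stand-in data; the real markers.json is not reproduced here.
const data = { markers: [] };
for (let i = 0; i < 500; i++) {
  data.markers.push({ id: i, name: "Marker " + i, lat: 45 + i / 1000, lng: -73 - i / 1000 });
}

// Serialize with the given indentation and report raw and gzipped byte counts.
function measure(value, indent) {
  const text = JSON.stringify(value, null, indent);
  return {
    raw: Buffer.byteLength(text, "utf8"),
    gzipped: zlib.gzipSync(text).length,
  };
}

// JSON.stringify accepts either a number of spaces or a string such as "\t".
const variants = { "8 spaces": 8, "4 spaces": 4, "2 spaces": 2, "1 tab": "\t" };
const results = {};
for (const [label, indent] of Object.entries(variants)) {
  results[label] = measure(data, indent);
  console.log(label + ": " + results[label].raw + " B raw, " + results[label].gzipped + " B gzipped");
}
```

To run it against a real file, replace the generated `data` with `JSON.parse(fs.readFileSync(path, "utf8"))`.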

In conclusion, I think this is another case where premature optimization is perhaps not the best use of your effort, and where readability or maintainability concerns probably outweigh size optimization.